Vehicles with automated driving capabilities promise to make driving safer, more comfortable, and more efficient by assisting the driver or taking responsibility for different driving tasks. While vehicles with assistance and partial automation capabilities are already in series production, the ultimate goal is to introduce vehicles with fully automated driving capabilities.

Reaching an advanced level of automation will require shifting all responsibilities, including the responsibility for overall vehicle safety, from the human to the computer-based system responsible for the automated driving functionality. Such a shift makes the ADS (Automated Driving System) highly safety-critical, requiring a safety level comparable to that of an aircraft system. I will elaborate on this statement in the following paragraphs.

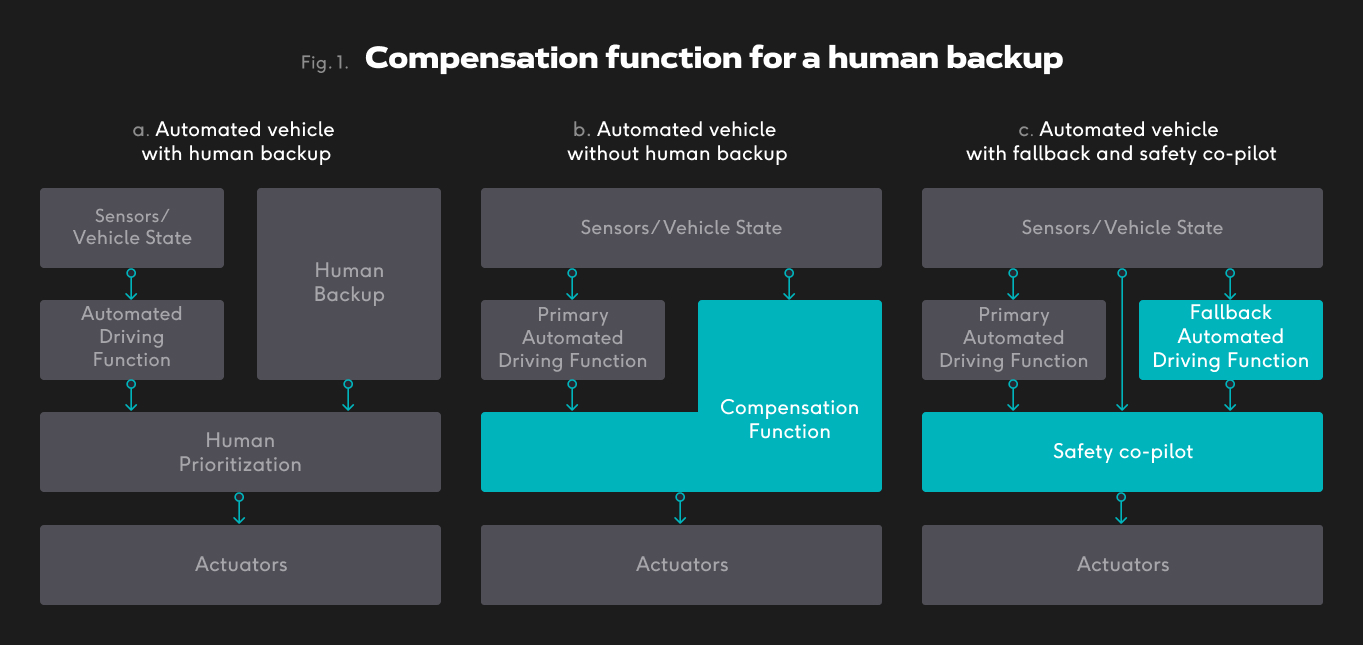

Every vehicle that implements automated driving with a human backup (SAE L1-L2) can be characterized as follows (see Figure 1, left part). The vehicle perceives its environment and its own state through sensors. Based on the sensor inputs, the automated driving function (ADF) calculates driving commands and proposes them for execution by the actuators. In parallel to the ADF, the human backup perceives the environment and the vehicle’s state through human senses and is ready to intervene at any time. The vehicle is designed so that the control exercised by the human backup is always and unconditionally prioritized over the driving commands from the ADF. Although humans may fail, human failure is socially accepted in the automotive domain.
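The unconditional prioritization of the human backup can be pictured with a minimal sketch. The `DrivingCommand` type, its fields, and the function name are hypothetical and chosen only for illustration; they do not describe any particular vehicle interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DrivingCommand:
    """Hypothetical driving command; the fields are illustrative assumptions."""
    steering_angle_rad: float
    acceleration_mps2: float


def arbitrate_with_human_backup(
    adf_command: DrivingCommand,
    human_command: Optional[DrivingCommand],
) -> DrivingCommand:
    """Select the command that is forwarded to the actuators.

    The human input, when present, always wins. No check is made on
    whether the human command is itself correct.
    """
    if human_command is not None:
        return human_command
    return adf_command
```

Note that the arbitration contains no plausibility check on the human input. That is exactly what "unconditional" means here.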

Every vehicle that implements automated driving without a human backup (SAE L4-L5) must implement a Compensation Function that replaces the human driver (see Figure 1, middle part). In this case, we call the automated driving function the Primary ADF. The Compensation Function is a diverse digital replica of the Primary ADF with similar or degraded automated driving capabilities.

The prioritization between the Primary ADF and the Compensation Function is often based on fault-tolerance principles. Fault-tolerant computing traces back to John von Neumann's work in 1956 and has been extensively developed in the aerospace, railway, and nuclear domains, as well as in the automotive field. In automotive, however, it is relatively immature, particularly in the automated driving (AD) domain. AD introduces new problems in the design of fault-tolerant systems that have not been addressed previously. I will outline some of these problems below.

Unconditional prioritization

It is crucial to understand that the Compensation Function cannot be unconditionally prioritized in the way a human driver is. The reason is that the Compensation Function can fail at the same time as the Primary ADF due to a common cause fault. Hence, additional safety measures are needed, such as runtime monitoring of the Compensation Function and a diverse implementation of the Primary ADF and the Compensation Function. The latter is a great “engineering tool” for avoiding common cause faults; however, it opens up new problems that I will elaborate on next.

The problem of replica determinism

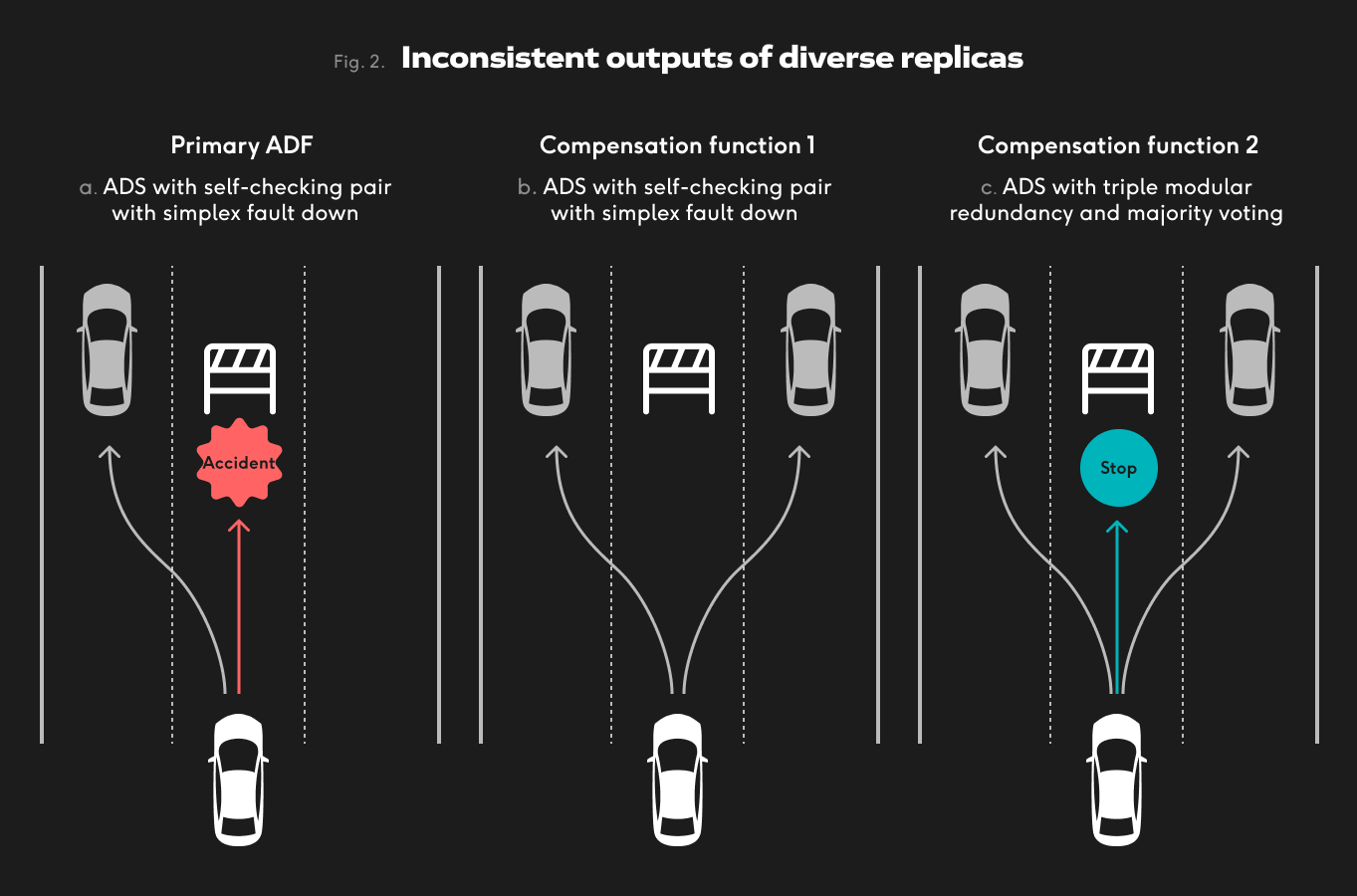

To avoid common cause faults, a diverse implementation of the Primary ADF and the Compensation Function is required. A significant concern with diversity in fault-tolerant systems is the problem of replica indeterminism: when several diverse versions of a system are in use, they are unlikely to produce exactly the same output at exactly the same time. Even small differences in the system designs will result in differences in their outputs. In the AD application domain, differences between the Primary ADF and the Compensation Function will result in the generation of different paths (see Figure 2, middle part). Because of such inconsistent outputs, fault-tolerance approaches that detect faults by checking for divergence among the replica outputs are very difficult to apply.

Let’s look at the example given in Figure 2 b). In this case, agreement between the Primary ADF and Compensation Function 1 will not be achieved, because the two functions will generate correct but different driving trajectories. Such a scenario leads to the false conclusion that the system is faulty (a false positive). Moreover, if either the Primary ADF or the Compensation Function really is faulty, this approach cannot identify which of the two is the faulty one.
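The false positive described above can be reproduced with a small sketch. It assumes, purely for illustration, that a trajectory is a list of (x, y) waypoints in metres and that the comparison is an exact equality check; the waypoint values are made up.

```python
from typing import List, Tuple

# Hypothetical representation: (x, y) waypoints in metres.
Trajectory = List[Tuple[float, float]]


def exact_agreement(a: Trajectory, b: Trajectory) -> bool:
    """Pairwise comparison that demands bit-identical outputs."""
    return a == b


# Two made-up trajectories that both stay in the lane but differ slightly,
# as outputs of diverse implementations would.
primary: Trajectory = [(0.0, 0.0), (5.0, 0.4), (10.0, 0.0)]
compensation: Trajectory = [(0.0, 0.0), (5.0, 0.6), (10.0, 0.0)]

# The comparison flags a fault although neither function is faulty,
# and it gives no hint about which output to trust.
pair_reports_fault = not exact_agreement(primary, compensation)
```

Here `pair_reports_fault` evaluates to `True` even though both trajectories are acceptable, and the boolean result carries no information about which of the two functions would be the faulty one.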

We run into similar problems with triple modular redundancy (TMR) and majority voting (see Figure 2, right): as all three functions (the Primary ADF and Compensation Functions 1 and 2) generate different outputs (trajectories), no majority will be achieved. To cope with inconsistent replica outputs, approximate agreement algorithms (for the self-checking pair) or inexact voting algorithms (for TMR) need to be deployed to combine these results. These algorithms, however, have their drawbacks. First, they reduce the fault-detection coverage. Second, they are extremely complex and hard to implement.
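The coverage drawback can be illustrated with a deliberately simple approximate-agreement check. This is a sketch under stated assumptions, not one of the published algorithms: trajectories are lists of (x, y) waypoints in metres, agreement means that corresponding waypoints lie within a fixed tolerance, and all numbers, including which deviation counts as faulty, are invented for the example.

```python
import math
from typing import List, Tuple

Trajectory = List[Tuple[float, float]]  # hypothetical (x, y) waypoints in metres


def max_deviation(a: Trajectory, b: Trajectory) -> float:
    """Largest Euclidean distance between corresponding waypoints."""
    return max(math.dist(p, q) for p, q in zip(a, b))


def approximately_agree(a: Trajectory, b: Trajectory, tolerance_m: float) -> bool:
    """Approximate agreement: same length and every waypoint within tolerance."""
    return len(a) == len(b) and max_deviation(a, b) <= tolerance_m


primary = [(0.0, 0.0), (5.0, 0.4), (10.0, 0.0)]
compensation = [(0.0, 0.0), (5.0, 0.6), (10.0, 0.0)]  # correct but different
faulty = [(0.0, 0.0), (5.0, 0.85), (10.0, 0.0)]       # assumed to be a faulty output

# A tight tolerance rejects two correct outputs (false positive).
tight_accepts_correct_pair = approximately_agree(primary, compensation, 0.1)

# A loose tolerance accepts the correct pair, but it also accepts the
# output assumed to be faulty, so that fault goes undetected.
loose_accepts_correct_pair = approximately_agree(primary, compensation, 0.5)
loose_accepts_faulty_output = approximately_agree(primary, faulty, 0.5)
```

Any tolerance wide enough to absorb the legitimate differences between diverse replicas also hides real faults of the same magnitude, which is the loss of fault-detection coverage mentioned above.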

Proposed Solution

I propose a concrete architecture for a compensation function that satisfies the requirements for diverse implementation and freedom from interference between the ADF and the compensation function. The top-level view of the architecture is depicted in the right part of Figure 1 and consists of three parts: a Primary ADF, a Fallback ADF, and a so-called safety co-pilot (SCP). The Fallback ADF is a diverse digital replica that replaces the human backup and can control the vehicle with full or degraded functionality. The safety co-pilot manages the coordinated operation of the Primary and Fallback ADFs and assures that correct driving commands are sent to the actuators. To do so, the safety co-pilot uses an understanding of how the system works to judge whether the Primary ADF operates correctly. Based on that judgement, the safety co-pilot prioritizes the output of either the Primary or the Fallback ADF.

In particular, the Primary and Fallback ADFs are in a hot-standby setup: the Primary is active, while the Fallback runs in parallel but is passive. If the SCP detects incorrect operation of the Primary ADF, it causes the vehicle to use the driving commands from the Fallback ADF instead of those from the Primary ADF. To avoid unconditionally prioritizing a faulty Fallback ADF, the SCP also applies the same application-specific correctness checks to the Fallback ADF. As no comparison or voting algorithms are used, the problem of replica determinism is avoided. Finally, the clear separation between the Primary and the Fallback ADF supports (i) sufficient freedom from interference between the Primary and the Fallback ADF and (ii) independent, diverse, multi-vendor development.
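The selection logic of the hot-standby setup can be sketched as follows. This is an illustration under assumptions, not the actual SCP design: the correctness check `within_corridor` is a hypothetical stand-in for the application-specific checks, trajectories are (x, y) waypoints in metres, and the `NONE` outcome for the case where both outputs fail is an assumption that the text above does not specify.

```python
from enum import Enum
from typing import Callable, List, Tuple

Trajectory = List[Tuple[float, float]]  # hypothetical (x, y) waypoints in metres


class Source(Enum):
    PRIMARY = "primary"
    FALLBACK = "fallback"
    NONE = "none"  # assumption: neither output passed its check


def within_corridor(trajectory: Trajectory, half_width_m: float = 1.0) -> bool:
    """Hypothetical correctness check: every waypoint stays inside a corridor."""
    return bool(trajectory) and all(abs(y) <= half_width_m for _, y in trajectory)


def select_source(
    primary: Trajectory,
    fallback: Trajectory,
    is_correct: Callable[[Trajectory], bool],
) -> Source:
    """Judge each output on its own; the two outputs are never compared."""
    if is_correct(primary):
        return Source.PRIMARY
    if is_correct(fallback):  # the fallback is checked too, never trusted blindly
        return Source.FALLBACK
    return Source.NONE
```

Because each trajectory is checked against the same criterion independently, two correct but different outputs do not trigger a fault, which is why this scheme does not need agreement or voting between replicas.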

A detailed description of the Safety Co-Pilot, including the type of application-specific knowledge used to detect ADS failures, can be downloaded from The Autonomous website.